Specific Challenge Tasks

For the challenge, we focus on the following tasks.

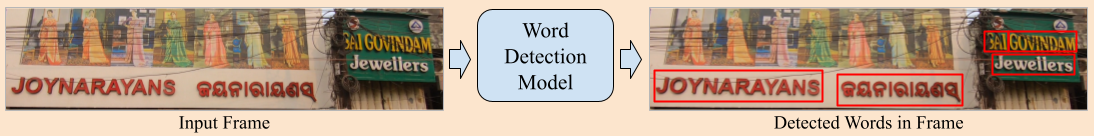

In this task, participants' methods should detect words across multiple scripts. The input consists of scene images containing text in various languages, and detection is required at the word level. The following figure shows the proposed task.

The F-score is the metric used for ranking participants' methods. It combines the recall and precision of the detected word bounding boxes compared to the ground truth. A detection is considered correct (a true positive) if its bounding box has over 50% overlap (intersection over union) with the ground truth box.
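The detection criterion above can be sketched in a few lines of Python. This is a minimal illustration, not the official evaluation script: it assumes axis-aligned boxes given as `(x_min, y_min, x_max, y_max)`, a greedy one-to-one matching between predictions and ground truth, and the usual harmonic-mean F-score. The names `iou` and `detection_f_score` are hypothetical, and the actual annotations may use a different box format.

```python
from typing import List, Tuple

# Assumed box format (not specified in the task text): axis-aligned corners.
Box = Tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max)


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def detection_f_score(preds: List[Box], gts: List[Box], thr: float = 0.5):
    """Return (precision, recall, F-score) for one image.

    Assumption: each ground truth box can be matched by at most one
    prediction, and each prediction takes the best still-unmatched box.
    """
    matched = set()
    true_positives = 0
    for p in preds:
        best_j, best_iou = -1, thr
        for j, g in enumerate(gts):
            if j in matched:
                continue
            value = iou(p, g)
            if value > best_iou:  # strictly over the 50% threshold
                best_j, best_iou = j, value
        if best_j >= 0:
            matched.add(best_j)
            true_positives += 1
    precision = true_positives / len(preds) if preds else 0.0
    recall = true_positives / len(gts) if gts else 0.0
    total = precision + recall
    f_score = 2 * precision * recall / total if total > 0 else 0.0
    return precision, recall, f_score
```

For example, with two ground truth boxes and two predictions of which only one overlaps a ground truth box sufficiently, precision, recall, and F-score all come out to 0.5.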

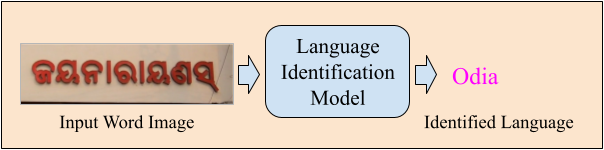

Our dataset images contain text in 11 languages: Bengali, English, Gujarati, Hindi, Kannada, Malayalam, Marathi, Oriya, Punjabi, Tamil, and Telugu. Punctuation and certain math symbols also appear as standalone words and are assigned a separate language class called Symbol. This gives 12 classes in total, making the task a 12-class classification problem. Words labeled as mixed-language and all don’t-care words, regardless of language, are excluded from this task. Given a cropped word image, the model must predict the language of that word. The following figure presents the task.

This metric compares participants’ language ID predictions for each word image with the ground truth. Let \(G = \{g_1, g_2, \ldots, g_n\}\) represent the ground truth language classes and \(T = \{t_1, t_2, \ldots, t_n\}\) the language classes predicted by a given method, with \(g_i\) and \(t_i\) corresponding to the same image. A language identification is correct (1) if \(g_i = t_i\); otherwise, it is incorrect (0). The overall accuracy is the number of correct identifications, \(S\), divided by the total number of word images, \(N = n\). Mathematically, \(Acc = \frac{S}{N}\).
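As a small illustration of the accuracy formula, the sketch below counts matching labels and divides by the number of word images. The function name `language_id_accuracy` and the use of plain strings as class labels are assumptions made for the example.

```python
from typing import Sequence


def language_id_accuracy(ground_truth: Sequence[str], predicted: Sequence[str]) -> float:
    """Acc = S / N, where S counts the positions with g_i == t_i."""
    if len(ground_truth) != len(predicted):
        raise ValueError("ground truth and predictions must have the same length")
    if not ground_truth:
        return 0.0  # assumption: report 0 for an empty evaluation set
    correct = sum(1 for g, t in zip(ground_truth, predicted) if g == t)
    return correct / len(ground_truth)


# Three of four predictions match the ground truth.
acc = language_id_accuracy(
    ["Hindi", "Tamil", "Symbol", "English"],
    ["Hindi", "Telugu", "Symbol", "English"],
)
print(acc)  # 0.75
```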

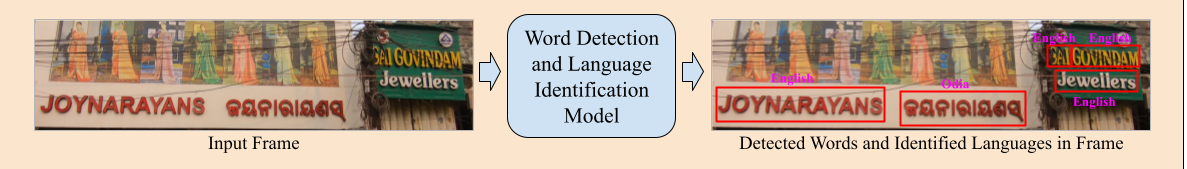

A participant's method should take a full scene image as input, detect the bounding box of each word, and identify the language ID of each word. The following figure explains the task.

The evaluation of this task combines accurate word detection and correct language identification. A result is counted as correct if the word bounding box meets Task-A’s detection criteria and the language for this detected word matches Task-B’s identification criteria.
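One way to read this joint criterion is sketched below: a predicted word counts as correct only if its box matches a ground truth box with IoU over 50% and the predicted language equals that box's ground truth language. The box format, the greedy matching, and the name `count_correct_words` are assumptions for illustration; how the official script aggregates these counts into a final score is not stated here.

```python
from typing import List, Tuple

Box = Tuple[float, float, float, float]  # assumed (x_min, y_min, x_max, y_max)
Word = Tuple[Box, str]                   # (bounding box, language label)


def _iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def count_correct_words(preds: List[Word], gts: List[Word], thr: float = 0.5) -> int:
    """Count predictions that pass both the detection and the language check."""
    used = set()
    correct = 0
    for p_box, p_lang in preds:
        best_j, best_iou = -1, thr
        for j, (g_box, _) in enumerate(gts):
            if j in used:
                continue
            value = _iou(p_box, g_box)
            if value > best_iou:
                best_j, best_iou = j, value
        if best_j < 0:
            continue  # detection criterion not met
        used.add(best_j)
        if gts[best_j][1] == p_lang:  # language must also match
            correct += 1
    return correct
```

A prediction with a well-placed box but the wrong language is therefore not counted, and neither is a correct language label attached to a box that misses every ground truth word.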

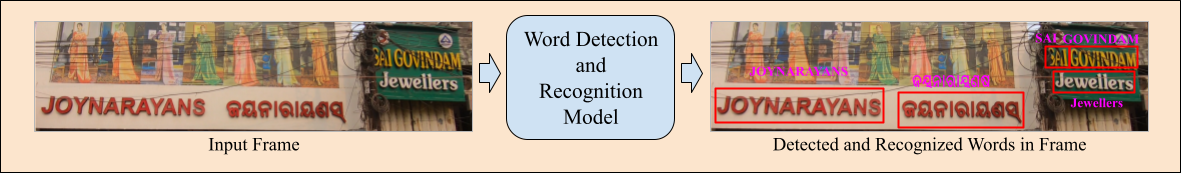

The end-to-end scene text detection and recognition in a multi-lingual setting aligns with similar English tasks: given an input scene image, the goal is to predict bounding boxes and provide their corresponding transcriptions. The following figure shows the task.

The evaluation for this task combines accurate word detection and recognition. A result is considered correct if the word bounding box meets Task-A’s detection criteria and its predicted transcription is correct. In such cases, the joint detection and recognition of the word is counted as correct. For recognition, we use two widely used evaluation metrics, Character Error Rate (CER) and Word Error Rate (WER), to evaluate the performance of the submitted methods. Error Rate (ER) is defined as $$ER = \frac{S + D + I}{N} \tag{1}$$ where \(S\) is the number of substitutions, \(D\) is the number of deletions, \(I\) is the number of insertions, and \(N\) is the number of instances in the reference text. For CER, Eq. 1 operates at the character level; for WER, it operates at the word level.
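Eq. 1 can be computed with a standard edit-distance (Levenshtein) routine, since the minimum number of substitutions, deletions, and insertions needed to turn the reference into the prediction is exactly \(S + D + I\). The sketch below is illustrative only. It assumes that characters are Unicode code points and that words are whitespace-separated tokens; the official evaluation may tokenize differently, for example by grapheme cluster for Indic scripts. All function names are hypothetical.

```python
from typing import Sequence


def edit_distance(ref: Sequence, hyp: Sequence) -> int:
    """Minimum number of substitutions, deletions, and insertions (S + D + I)."""
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, 1):
        curr = [i]
        for j, h in enumerate(hyp, 1):
            curr.append(min(
                prev[j] + 1,             # deletion of a reference item
                curr[j - 1] + 1,         # insertion of a hypothesis item
                prev[j - 1] + (r != h),  # substitution, or free match
            ))
        prev = curr
    return prev[-1]


def error_rate(ref: Sequence, hyp: Sequence) -> float:
    """ER = (S + D + I) / N, with N the length of the reference."""
    if len(ref) == 0:
        raise ValueError("reference must not be empty")
    return edit_distance(ref, hyp) / len(ref)


def cer(ref: str, hyp: str) -> float:
    """Character Error Rate: Eq. 1 at the character (code point) level."""
    return error_rate(list(ref), list(hyp))


def wer(ref: str, hyp: str) -> float:
    """Word Error Rate: Eq. 1 over whitespace-separated words."""
    return error_rate(ref.split(), hyp.split())


print(cer("hello", "hallo"))                         # 0.2
print(wer("the quick brown fox", "the quick fox"))   # 0.25
```

Note that the error rate can exceed 1 when the prediction contains many more items than the reference, because insertions are counted in the numerator but not in \(N\).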